project Betfair, part 8

Introduction

Previously on project Betfair, we gave up on market making and decided to move on to a simpler Betfair systematic trading idea that seemed to work.

Today on project Betfair, we'll trade it live and find out it doesn't really work.

DAFBot on greyhounds: live trading

With the timezone bug now fixed, I was ready to let my bot actually trade some greyhound races for a while. I started trading with the following parameters:

- Favourite selection time: 180s

- Entry order time: 160s

- Exit time: 60s

- Bet size: £5

I decided to pick the favourite closer to the trade: if it were picked 5 minutes before the race start, it could change, and there was no real point in locking it in so long before the actual trade. The 60s exit point was mostly there to give me a margin of safety to liquidate the position manually if something happened, and to cover the case of the market going in-play before the advertised time. There's no in-play trading in greyhound markets, so I'd carry any exposure into the race, at which point it would become gambling and not trading.

Results

So how did it do?

Well, badly for now. Over the course of 25 races, in 4 hours, it lost £5.93: an average of -£0.24 per race with a standard deviation of £0.42. That was kind of sad and slightly suspicious too: according to the fairly sizeable dataset I had backtested this strategy on, its return was, on average, £0.07 with a standard deviation of £0.68.

I took the empirical CDF of the backtested distribution of returns, and according to it, getting a total return of -£5.93 or worse over 25 races had a probability of 1.2%. So something clearly was going wrong, either with my backtest or with the live bot.
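As a rough cross-check of that figure, the same tail probability can be estimated with a normal approximation. This is a swapped-in technique, not the empirical CDF used above, and it assumes the races are independent and uses only the backtested per-race mean (£0.07) and standard deviation (£0.68) quoted earlier:

```python
import math


def normal_cdf(z):
    """Standard normal CDF, computed via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_total_below(total, n, mean, std):
    """P(sum of n i.i.d. returns < total) under a normal approximation."""
    z = (total - n * mean) / (std * math.sqrt(n))
    return normal_cdf(z)


# Figures from the text: 25 live races, total loss of 5.93,
# backtested per-race mean 0.07 and standard deviation 0.68.
p = prob_total_below(total=-5.93, n=25, mean=0.07, std=0.68)
print("P(total < -5.93) ~ {:.1%}".format(p))
```

The approximation comes out at roughly 1%, in the same region as the empirical figure, so the conclusion doesn't hinge on the exact shape of the backtested distribution.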

I scraped the stream market data out of the bot's logs and ran the backtest just on those races. Interestingly, it predicted a return of -£2.70. What was going wrong? I also scraped the traded runners, the entry and the exit prices from the logs and from the simulation to compare. They didn't match! A few times the runner that the bot was trading was different, but fairly often the entry odds that the bot got were lower (recall that the bot wants to be long the market, so entering at lower odds (higher price/implied probability) is worse for it). Interestingly, there was almost no mismatch in the exit price: the bot would manage to close its position in one trade without issues.

After looking at the price charts for a few races, I couldn't understand what was going wrong. The price wasn't swinging wildly to fool the bot into placing orders at lower odds: in fact, the price 160s before the race start was just different from what the bot was requesting.

Turns out, it was yet another dumb mistake: the bot was starting to trade 150s before the race start and picking the favourite at that point as well. Simulating what the bot actually did brought the backtest's PnL (on just those markets) to within a few pence of the realised PnL.

So that was weird: moving the start time by 10 seconds more than doubled the loss on that dataset (by bringing it from -£2.70 to -£5.93).

Greyhound capacity analysis

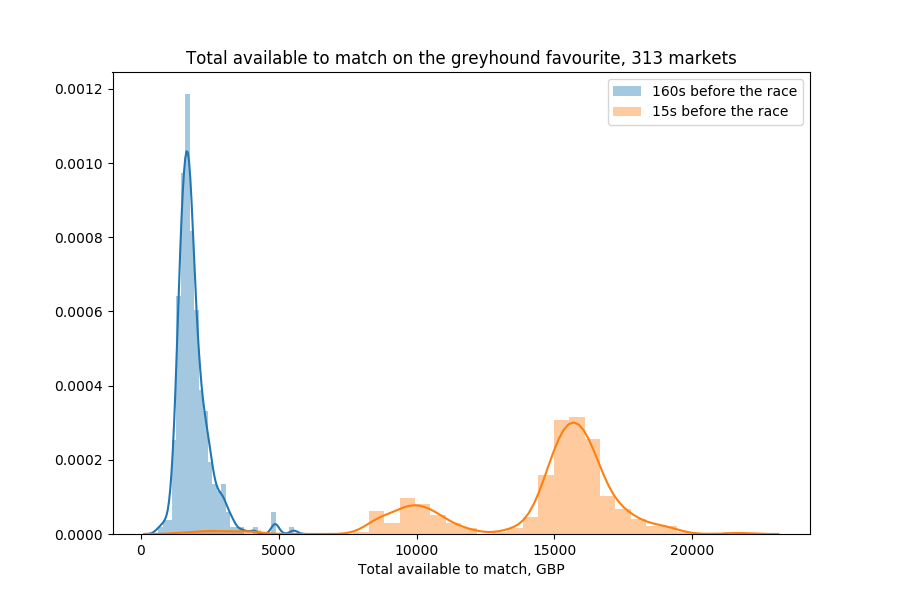

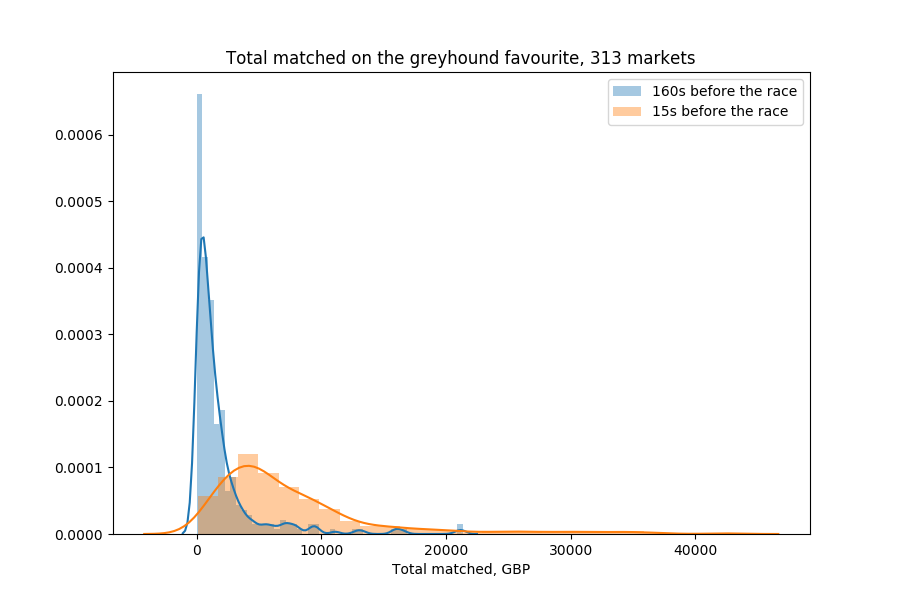

There was another issue, though: the greyhound markets aren't that liquid.

While there is about £10000-£15000 worth of bets available to match against in an average greyhound race, this also includes silly bets (like offering to lay at 1000.0).

To demonstrate this better, I added market impact to the backtester: even assuming that the entry bet gets matched 160s before the race (which becomes more difficult to believe at higher bet amounts, given that the average total matched volume by that point is around £100), closing the bet might not be possible to do completely at one odds level: what if there isn't enough capacity available at that level and we have to place another lay bet at higher odds?

Here's some code that simulates that:

    def get_long_return(lines, init_cash, startT, endT, suspend_time,
                        market_impact=True, cross_spread=False):
        # lines is a list of tuples: (timestamp, available_to_back,
        #                             available_to_lay, total_traded)
        # available to back/lay/traded are dictionaries
        # of odds -> availability at that level

        # Get start/end availabilities
        # (get_line_at is a helper defined elsewhere in the backtester)
        start = get_line_at(lines, suspend_time, startT)
        end = get_line_at(lines, suspend_time, endT)

        # Calculate the inventory
        # If we cross the spread, use the best back odds, otherwise assume we get
        # executed at the best lay
        if cross_spread:
            exp = init_cash * max(start[1])
        else:
            exp = init_cash * min(start[2])

        # Simulate us trying to sell the contracts at the end
        final_cash = 0.
        for end_odds, end_avail in sorted(end[2].items()):
            # How much inventory were we able to offload at this odds level?
            # If we don't simulate market impact, assume all of it.
            mexp = min(end_odds * end_avail, exp) if market_impact else exp
            exp -= mexp
            final_cash += mexp / end_odds

            # If we have managed to sell all contracts, return the final PnL.
            if exp < 1e-6:
                return final_cash - init_cash

        # If we got to here, we've managed to knock out all price levels
        # in the book.
        return final_cash - init_cash

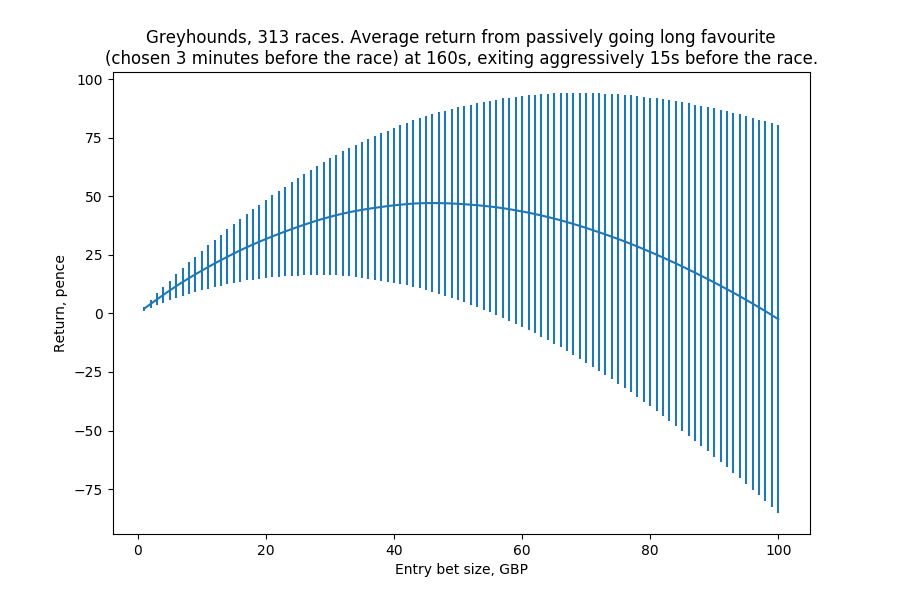

I then did several simulations of the strategy at different bet sizes.

Turns out, as we increase the bet size away from just £1, the PnL quickly decays (the vertical lines show the standard error, not the standard deviation). For example, at a bet size of £20, the average return per race is just £0.30 with a standard deviation of about £3.00 and a standard error of £0.17.

DAFBot on horses

At that point, I had finally managed to update my new non-order-book simulator so that it could work on horse racing data, which was great, since horse markets were much more preferable to greyhound ones: they were more liquid and there was much less opportunity for a single actor to manipulate prices. Hence there would be more capacity for larger bet sizes.

In addition, given that the spreads in horses are much tighter, I wasn't worried about having a bias in my backtests. The greyhound backtest assumes we can get executed at the best lay, so most of its PnL could have come from the massive back-lay spread at 160s before the race, even though I had limited the markets in the backtest to those with spreads below 5 ticks.

Research

I backtested a similar strategy on horse data but, interestingly enough, it didn't work: the average return was well within the standard error from 0.

However, flipping the desired position (instead of going long the favourite, betting against it) resulted in a curve similar to the one for the greyhound strategy. In essence, it seemed as if there was an upwards drift in the odds on the favourite in the final minutes before the race. Interestingly, I can't reproduce those results with the current, much larger, dataset that I've gathered (even if I limit the data to what I had at that point), so the following results might not be as exciting.

The headline number, according to my notes at that time, was that, on average, with £20 lay bets, entering at 300 seconds before the race and exiting at 130 seconds, the return was £0.046 with a standard error of £0.030 and a standard deviation of £0.588. This seems like very little, but the £20 bet size would be just a start. In addition, there are about 100 races every day (UK + US), hence annualizing that would result in a mean of £1690 and a standard deviation of £112.
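The annualisation can be sketched as follows. This is a minimal sketch that assumes the per-race returns are independent and that the strategy trades all 365 days of the year (neither is stated above), so the mean scales with the number of races and the standard deviation with its square root:

```python
import math


def annualise(mean_per_race, std_per_race, races_per_day, days_per_year=365):
    """Scale per-race mean/std to a year, assuming independent races."""
    n = races_per_day * days_per_year
    return mean_per_race * n, std_per_race * math.sqrt(n)


# Per-race figures from the text: mean 0.046, standard deviation 0.588.
annual_mean, annual_std = annualise(0.046, 0.588, races_per_day=100)
sharpe = annual_mean / annual_std

print("mean {:.0f}, std {:.0f}, Sharpe {:.1f}".format(annual_mean, annual_std, sharpe))
```

With the rounded per-race inputs this gives a mean of about £1680 rather than £1690; the small gap presumably comes from rounding of the per-race mean. The standard deviation and the Sharpe ratio of about 15 match.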

This looked nice (barring the unrealistic Sharpe ratio of 15), but the issue was that it didn't scale well: at bet sizes of £100, the annualized mean/standard deviation would be £5020 and £570, respectively, and it would get worse further on.

I had also found out that, at £100 bet sizes, limiting the markets to just those operating between 12pm and 7pm (essentially just the UK ones) gave better results, even though the strategy would only be able to trade 30 races per day. The mean/standard deviation were £4220 and £310, respectively: a smaller average return and a considerably smaller standard deviation. This was because the US markets were generally thinner and the strategy would crash through several price levels in the book to liquidate its position.

Note this was also using bet sizes and not exposures: so to place a lay of £100 at, say, 4.0, I would have to risk £300. I didn't go into detailed modelling of how much money I would need deposited to be able to trade this for a few days, but in any case I wasn't ready to trade with stakes that large.
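The difference between bet size and money at risk for a lay bet is just the standard liability formula, shown here as a tiny helper (the function name is made up for illustration):

```python
def lay_liability(stake, odds):
    """Amount the layer loses if the runner wins: stake * (odds - 1)."""
    return stake * (odds - 1.0)


# The example from the text: a 100 lay at odds of 4.0 risks 300.
liability = lay_liability(100.0, 4.0)
print(liability)
```

The liability grows linearly with the odds, so the same £100 lay on an outsider ties up far more capital than on a short-priced favourite.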

Placing orders below £2

One of the big issues with live trading the greyhound DAFBot was the fact that the bot can't place orders below £2. Even if it offers to buy (back), say, £10 at 2.0, only £2 of its offering could actually get matched. After that point, the odds could go to, say, 2.5, and the bot would now have to place a lay bet of £2 * 2.0 / 2.5 = £1.6 to close its position.

If it doesn't do that, it would have a 4-contract exposure to the runner that it paid £2 for (will get £4 if the runner wins for a total PnL of £2 or £0 if the runner doesn't win for a total PnL of -£2).

If it instead places a £2 lay on the runner, it will have a short exposure of 2 * 2.0 - 2 * 2.5 = -1 contract (in essence, it first has bought 4 contracts for £2 and now has sold 5 contracts for £2: if the runner wins, it will lose £1, and if the runner loses, it will win nothing). In any case, it can't completely close its position.
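The three cases above (no hedge, an exact £1.60 hedge, and a £2 over-hedge) can be checked with a few lines of arithmetic. The helper names are hypothetical and only illustrate the calculation:

```python
def closing_lay_stake(back_stake, back_odds, lay_odds):
    """Lay stake that sells exactly the contracts bought by the back bet."""
    return back_stake * back_odds / lay_odds


def pnl(back_stake, back_odds, lay_stake, lay_odds):
    """Return (PnL if the runner wins, PnL if the runner loses)."""
    if_wins = back_stake * (back_odds - 1.0) - lay_stake * (lay_odds - 1.0)
    if_loses = lay_stake - back_stake
    return if_wins, if_loses


# Backed 2 at odds of 2.0, then the odds drift to 2.5.
exact = closing_lay_stake(2.0, 2.0, 2.5)  # about 1.6, below the minimum

unhedged = pnl(2.0, 2.0, 0.0, 2.5)     # open 4-contract long position
hedged = pnl(2.0, 2.0, exact, 2.5)     # flat: same loss either way
overhedged = pnl(2.0, 2.0, 2.0, 2.5)   # net short 1 contract

for name, result in [("unhedged", unhedged), ("hedged", hedged),
                     ("over-hedged", overhedged)]:
    print(name, result)
```

Only the £1.60 lay leaves the same outcome whichever way the race goes (a locked-in loss of about £0.40 in this example); the other two leave the bot with a residual position.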

So that's suboptimal. Luckily, Betfair documents a loophole in the order placement mechanism that can be used to place orders below £2. They do say that it should only be used to close positions and not for normal trading (otherwise people would be trading with £0.01 amounts), but that's exactly our use case here.

The way it's done is:

- Place an order above £2 that won't get matched (say, a back of 1000.0 or a lay of 1.01);

- Partially cancel some of that order to bring its unmatched size to the desired amount;

- Use the order replacement instruction to change the odds of that order to the desired odds.
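The three steps above can be sketched against a toy in-memory exchange. Everything here (`FakeExchange`, its method names and `place_small_order`) is hypothetical and is not Betfair's API; a real client would issue the corresponding place, cancel and replace requests and would need error handling around each step:

```python
MIN_STAKE = 2.0  # minimum order size mentioned in the text


class FakeExchange:
    """Hypothetical stand-in for an exchange client, for illustration only."""

    def __init__(self):
        self.orders = {}
        self._next_id = 1

    def place(self, side, odds, size):
        # Mimic the restriction that motivates the workaround.
        if size < MIN_STAKE:
            raise ValueError("order below minimum stake")
        bet_id = self._next_id
        self._next_id += 1
        self.orders[bet_id] = {"side": side, "odds": odds, "size": size}
        return bet_id

    def reduce_size(self, bet_id, reduction):
        # Partial cancel: shrink the unmatched size of an existing order.
        self.orders[bet_id]["size"] -= reduction

    def replace_odds(self, bet_id, new_odds):
        # Re-price the existing order (simplified: the id stays the same).
        self.orders[bet_id]["odds"] = new_odds


def place_small_order(exchange, side, odds, size):
    """Place an order smaller than MIN_STAKE using the three steps."""
    # 1. Park a minimum-size order at odds that will not get matched.
    parked_odds = 1000.0 if side == "BACK" else 1.01
    bet_id = exchange.place(side, parked_odds, MIN_STAKE)
    # 2. Partially cancel it down to the desired size.
    exchange.reduce_size(bet_id, MIN_STAKE - size)
    # 3. Move it to the odds we actually want.
    exchange.replace_odds(bet_id, odds)
    return bet_id


exchange = FakeExchange()
bet_id = place_small_order(exchange, "LAY", 2.5, 1.6)
print(exchange.orders[bet_id])
```

Between steps 1 and 3 the order sits far from the market, so the only real risk in the sequence is the market moving while the three requests are in flight.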

Live trading

I started a week of fully automated live trading on 2nd October. That was before I implemented placing bets below £2, and the bot got lucky on a few markets: it was unable to fully close its short exposure, and the runner it was betting against ended up losing. That was nice, but not exactly intended. I also changed the bot to place bets based on a target exposure of 10 contracts (as opposed to stakes of £10), hence the bet size would be 10 / odds.

In total, the bot made £3.60 on that day after trading 35 races.

Things quickly went downhill after I implemented order placement below £2:

- 3rd October: -£3.41 on 31 races (mostly due to it losing £3.92 on US markets after 6pm);

- 4th October: increased bet size to 15 contracts; PnL -£2.59 on 22 races;

- 5th October: made sure to exclude markets with large back-lay spreads from trading; -£2.20 on 25 races (largest loss -£1.37, largest win £1.10);

- 6th October: -£3.57 on 36 races;

- 7th October: -£1.10 on 23 races.

In total, the bot lost £9.27 over 172 races, which is about £0.054 per race. Looking at Betfair, the bot had made 395 bets (entry and exit, as well as additional exit bets at lower odds levels when there wasn't enough available at one level) with stakes totalling £1409.26. Of course, it wasn't risking more than £15 at any point, but turning over that much money without issues was still impressive.
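For reference, the totals follow directly from the daily figures listed above; a quick tally:

```python
# (date, PnL in pounds, races traded), as listed in the text.
daily = [
    ("2 Oct", 3.60, 35),
    ("3 Oct", -3.41, 31),
    ("4 Oct", -2.59, 22),
    ("5 Oct", -2.20, 25),
    ("6 Oct", -3.57, 36),
    ("7 Oct", -1.10, 23),
]

total_pnl = sum(pnl for _, pnl, _ in daily)
total_races = sum(races for _, _, races in daily)
per_race = total_pnl / total_races

print("{:.2f} over {} races, {:.3f} per race".format(total_pnl, total_races, per_race))
```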

What wasn't impressive was that it consistently lost money, contrary to the backtest.

Conclusion

At that point, I was slowly growing tired of Betfair. I played with some more ideas that I might write about later, but in total I had been at it for about 2.5 months and had another interesting project in mind. So, for now, project Betfair had to go on an indefinite hiatus.

To be continued...

Enjoyed this series? I do write about other things too, sometimes. Feel free to follow me on twitter.com/mildbyte or on this RSS feed! Alternatively, register on Kimonote to receive new posts in your e-mail inbox.
